In 2023, return on investment and clinical validation of a technology’s platform will be the greatest indicators of a digital health company’s success (GSR Ventures, 2023). Recent efforts to examine clinical robustness in digital health companies find that many in fact have low levels of robustness, as measured by regulatory data, clinical trial registrations, and clinical, economic, and engagement claims (Day, Shah, Kaganoff, Powelson & Mathews, 2022).

A strong research function is critical for validating products and services. Conducting behavioral research for Lirio means I get to play a formative role in shaping how we conduct research with clients who want to collaborate. In addition to validating our interventions and improving our artificial intelligence platform, we also seek to disseminate our research and add our voices to the debates in our respective fields.

Below are a few of the considerations that we encounter when we operationalize new research (with thanks to the ReOps community for their Eight Pillars of User Research, on which these eight questions are based).

Why do research at all?

The first question to answer is why to do the research at all. Research can have many goals, for example determining feasibility, acceptability, efficacy, or effectiveness. Other goals, such as demonstrating return on investment, need not be bona fide research but could instead be accomplished during a program evaluation. The needs of key stakeholders drive the need for research, and the question of why drives the type and design of the research.

How and when does research happen?

Feasibility and acceptability studies occur early on in intervention development.

Feasibility: can Precision Nudging interventions be easily implemented? (Yes.)

Acceptability: are Precision Nudging interventions acceptable to those who receive them? (We are in the process of publishing survey studies suggesting that yes, these interventions are acceptable.)

When an intervention is already developed, enter effectiveness studies. Effectiveness studies examine interventions under circumstances that more closely approach real-world practice.

Effectiveness: does Precision Nudging work in the real world, better than a standard-of-care control? (We are running a randomized controlled trial with Rochester Regional Health to find out.)

Then come the usefulness studies, which may or may not be conducted formally.

Usefulness: what is the economic impact of implementing Precision Nudging? (We developed a model to prospectively estimate the economic impact of Precision Nudging.)

Who does the research?

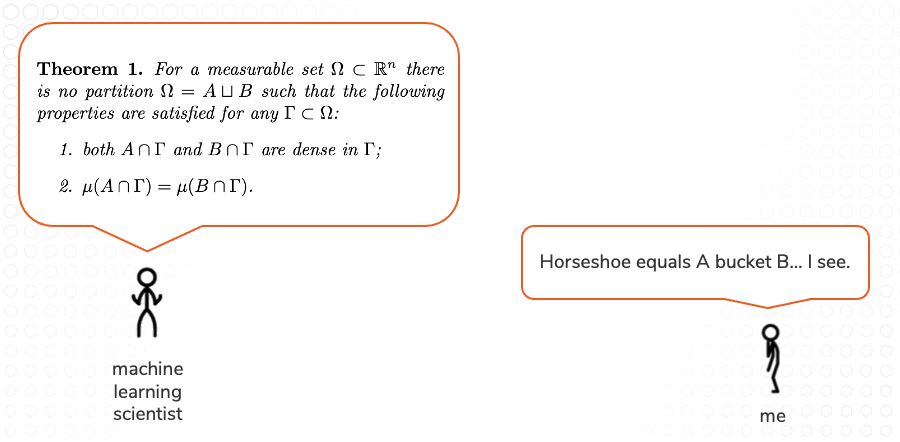

At Lirio we set up a lab, the Behavioral Reinforcement Learning Lab (BReLL). Our purpose is to “re-shape the current understanding of how behavior change and artificial intelligence come together to address some of the most stubbornly persistent challenges in healthcare.” We conduct varied projects and have to decide who leads them. Late last year our machine learning colleagues approached us with an idea ahead of one of their biggest conferences of the year. Our initial conversations looked something like this:

A joint project led by one of our behavioral scientists will necessarily look different from a joint project led by one of our machine learning scientists. (The final project led by our machine learning colleague looked like this).

What are the constraints?

Not only do scientists of different disciplines speak different languages, but they are also beholden to different constraints. One example is intellectual property. Often, machine learning algorithms fail to gain patent protection because the invention is deemed by law to be an abstract idea. Publishing your machine learning algorithms means you can prove you did it first. But publishing them also makes them publicly available, so there is naturally a hesitation to do so. Meanwhile, behavioral scientists are driven to publish, and have to temper expectations in joint collaborations. Other constraints include deadlines, budget, market forces, and organizational maturity.

Who manages participants, incentives, scheduling, and logistics?

When you receive 17,090 unsolicited text message replies to your COVID-19 vaccination Precision Nudging intervention, who is in charge of reading them? (I was.)

Is there a centralized team of one or more who manage the research, or does each project have its own manager? Our centralized research operations manage most interdisciplinary research projects originating in the Behavioral Reinforcement Learning Lab.

What happens to the findings, data, and insights?

The collaborative research conducted through the Behavioral Reinforcement Learning Lab improves Lirio’s artificial intelligence platform and the Precision Nudging interventions that rely on it. Internal sharing of results and findings enables us to use our own evidence base in the design and development of new Precision Nudging interventions. External sharing of results and findings enables us to share our learnings with others, receive learnings in return, and in the process build broader and more generalizable support for the use of Precision Nudging.

What are legal and ethical considerations?

IRB, RCA, DSA, BAA, HSR, COI, HIPAA, and other TLAs. These acronyms may be familiar to you but, depending on their fields, might not be familiar to your collaborators. In an interdisciplinary research lab, we have to accommodate the strictest requirements among the disciplines involved. For us, for example, that means enabling machine learning scientists to undergo human subjects research ethics training, whether or not they ever touch raw human data.

What systems and tools do you need?

Figuring out which tools you will actually use takes time. I prefer to satisfice when it comes to tooling (research suggests satisficers are happier with their choices too). We partner with CloudResearch to administer Qualtrics surveys via Amazon MTurk. Many other tools and vendors do the same or similar things. The key is finding something you will actually use.

What other questions might one ask, that maybe I missed? How might this list change over time? I am curious to find out.